Introduction

In the early 2000s, point cloud rendering, particularly with point splatting, was a well-researched area in computer graphics. Simultaneously, image-based rendering approaches grew in popularity.

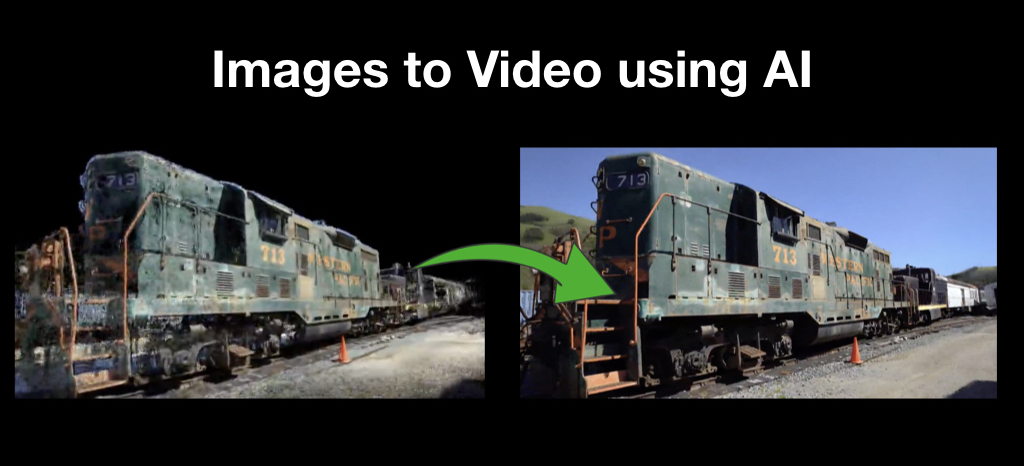

In image-based rendering, novel views are synthesized from a coarse reconstructed 3D model together with a set of registered photos of an object. These techniques are sensitive to imprecise input: for example, ghosting artifacts appear if the geometry has holes or if the input photos are not perfectly aligned.

Neural image-based rendering approaches use neural networks to compensate for these imperfections and can generate photo-realistic novel views of exceptional quality.

What is ADOP (Approximate Differentiable One-Pixel Point Rendering)?

Paper By: Darius Rückert, Linus Franke, Marc Stamminger

The paper presents a rendering pipeline that combines a standard point rasterizer with a deep neural network, an approach that is especially useful for 3D reconstruction. The authors extend and improve this pipeline in several ways.

They add a physically-based, differentiable camera model and a differentiable tone mapper, along with a formulation that better approximates the spatial gradient of one-pixel point rasterization. Because the whole pipeline is differentiable, training can optimize not only the neural point features but also correct imprecisions in the input.
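Why the spatial gradient needs approximating can be seen in a tiny 1D experiment. A one-pixel point lands in pixel `floor(x)`, so the rendered image is piecewise constant in `x` and its true derivative is zero almost everywhere. The sketch below uses a plain central finite difference over a one-pixel shift as a stand-in; it is not ADOP's ghost-geometry formulation, and the function names (`render_one_pixel`, `approx_spatial_grad`) are hypothetical.

```python
import math


def render_one_pixel(x, value, width, background=0.0):
    """Draw a single point as exactly one pixel (index floor(x)) in a 1D image."""
    img = [background] * width
    px = math.floor(x)
    if 0 <= px < width:
        img[px] = value
    return img


def l2_loss(img, target):
    """Sum of squared per-pixel differences."""
    return sum((a - b) ** 2 for a, b in zip(img, target))


def approx_spatial_grad(x, value, target, background=0.0):
    """Central finite difference of the loss over a one-pixel shift of the point.

    The rendered image is piecewise constant in x, so d(loss)/dx is zero almost
    everywhere; comparing the loss with the point moved one pixel right and one
    pixel left gives a usable approximation (hypothetical stand-in, not ADOP's).
    """
    width = len(target)
    loss_right = l2_loss(render_one_pixel(x + 1.0, value, width, background), target)
    loss_left = l2_loss(render_one_pixel(x - 1.0, value, width, background), target)
    return (loss_right - loss_left) / 2.0


# Usage: the target has a bright pixel at index 5; the point starts at x = 4.5.
target = render_one_pixel(5.0, 1.0, 10)
x = 4.5
grad = approx_spatial_grad(x, 1.0, target)  # negative: moving right lowers the loss
x -= 1.0 * grad  # one gradient-descent step with learning rate 1.0
print(math.floor(x))  # → 5
```

This approximation only "sees" the target when the point is within one pixel of it; further away, both shifted losses are equal and the gradient is zero, which is one reason a better gradient formulation matters.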

Driven by the visual loss of the neural rendering network, the system adjusts the camera pose and camera model, and estimates per-image exposure and white-balance values as well as a vignetting model and a sensor response curve for each camera.
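As a minimal sketch of how a per-image photometric parameter can be recovered from a visual loss, the following fits a single exposure value by gradient descent on a squared error. It assumes a hypothetical exposure model in which rendered HDR radiance is scaled by `2 ** (-ev)`; ADOP's actual camera model also includes white balance, vignetting, and a response curve, all optimized jointly with the rest of the pipeline.

```python
import math

LN2 = math.log(2.0)


def apply_exposure(hdr, ev):
    """Hypothetical exposure model: scale HDR radiance by 2 ** (-ev)."""
    return [h * 2.0 ** (-ev) for h in hdr]


def fit_exposure(hdr, observed, ev=0.0, lr=1.0, steps=200):
    """Estimate ev by gradient descent on sum((pred - observed) ** 2)."""
    for _ in range(steps):
        pred = apply_exposure(hdr, ev)
        # d(pred)/d(ev) = -ln(2) * pred, so dL/d(ev) = sum(2 * (pred - obs) * -ln(2) * pred)
        grad = sum(2.0 * (p - o) * (-LN2 * p) for p, o in zip(pred, observed))
        ev -= lr * grad
    return ev


# Usage: synthesize an "observed" photo taken one stop darker, then recover the stop.
hdr = [0.2, 0.5, 0.8, 1.0]
observed = apply_exposure(hdr, 1.0)
print(round(fit_exposure(hdr, observed), 3))  # → 1.0
```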

This cleaned and corrected input greatly improves rendering quality. Furthermore, the system can render views under arbitrary camera settings in HDR or LDR and correct under- or overexposed views. Finally, because brightness and color variations are handled separately by a physically accurate sensor model, the number of parameters in the deep neural network can be greatly reduced.

Key contributions

- An end-to-end trainable point-based neural rendering pipeline for scene refining and visualization.

- A differentiable rasterizer for one-pixel point splats based on the idea of ghost geometry.

- A differentiable, physically-based tonemapper that simulates the lens and sensor effects of digital photography.

- A stochastic point discarding strategy for efficient multi-layer rendering of large point clouds.

- An open-source implementation of the proposed method that is easy to use and can be adapted to new problems.

How does it work?

The authors present a novel point-based, differentiable neural rendering pipeline for scene refinement and novel view synthesis. The input is an initial estimate of the point cloud and the camera parameters. The output is a set of images synthesized from arbitrary camera poses.

A differentiable renderer draws the point cloud using multi-resolution one-pixel point rasterization. The novel concept of ghost geometry is used to approximate the spatial gradients of this discrete rasterization. After rasterization, the resulting neural image pyramid is passed through a deep neural network that performs shading and hole filling.
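The multi-resolution one-pixel rasterization step can be illustrated with a simplified CPU sketch: every point is written to exactly one pixel at each pyramid level, and a depth test keeps the nearest point. The input layout (normalized `u, v` coordinates, a depth, and an opaque feature) and the function name are assumptions for illustration; ADOP itself runs on the GPU, and its handling of multiple points per pixel is not reproduced here.

```python
import math


def rasterize_pyramid(points, width, height, levels):
    """One-pixel point rasterization into an image pyramid with a depth test.

    points: sequence of (u, v, depth, feature), with u, v in [0, 1).
    Returns one grid per level (level 0 = full resolution); each cell holds
    the feature of the nearest point that landed there, or None for a hole.
    """
    pyramid = []
    for level in range(levels):
        w = max(1, width >> level)
        h = max(1, height >> level)
        zbuf = [[math.inf] * w for _ in range(h)]
        image = [[None] * w for _ in range(h)]
        for u, v, depth, feature in points:
            x, y = math.floor(u * w), math.floor(v * h)
            if 0 <= x < w and 0 <= y < h and depth < zbuf[y][x]:
                zbuf[y][x] = depth
                image[y][x] = feature
        pyramid.append(image)
    return pyramid


# Usage: two points compete for the same pixel; the nearer one wins at every level.
points = [(0.1, 0.1, 2.0, "far"), (0.1, 0.1, 1.0, "near"), (0.9, 0.9, 1.0, "b")]
pyramid = rasterize_pyramid(points, 4, 4, 2)
print(pyramid[0][0][0], pyramid[1][1][1])  # → near b
```

Coarser levels have fewer pixels, so more points share each pixel and fewer holes remain, which is one reason a pyramid is a convenient input for a hole-filling network.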

Finally, a differentiable, physically-based tonemapper converts the intermediate output into the target image. Because every stage of the pipeline is differentiable, all scene parameters can be optimized: camera model, camera pose, point positions, point colors, environment map, rendering network weights, vignetting, camera response function, per-image exposure, and per-image white balance.
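A forward pass of a physically-based tonemapper can be sketched per pixel as exposure, white balance, and vignetting applied to the HDR value, followed by a response curve. The specific forms here are assumptions: exposure as a `2 ** (-ev)` scale, a radial polynomial vignette `1 + k1*r^2 + k2*r^4`, and a clamped gamma curve standing in for a learned camera response function. Apart from the flat regions of the clamp, each step is a simple differentiable operation, so gradients can reach every parameter.

```python
def tonemap_pixel(rgb_hdr, r, ev, wb_gains, vignette_coeffs, gamma=2.2):
    """Map one HDR pixel to an LDR value with a simple photometric camera model.

    rgb_hdr:         linear HDR color (three floats)
    r:               distance of the pixel from the image center, normalized to [0, 1]
    ev:              exposure value; radiance is scaled by 2 ** (-ev) (assumed convention)
    wb_gains:        per-channel white-balance multipliers
    vignette_coeffs: (k1, k2) of the assumed radial model 1 + k1*r^2 + k2*r^4
    gamma:           a clamped gamma curve stands in for a learned response function
    """
    k1, k2 = vignette_coeffs
    exposure = 2.0 ** (-ev)
    vignette = 1.0 + k1 * r ** 2 + k2 * r ** 4
    out = []
    for value, gain in zip(rgb_hdr, wb_gains):
        linear = value * exposure * gain * vignette
        linear = min(max(linear, 0.0), 1.0)  # clip to the representable range [0, 1]
        out.append(linear ** (1.0 / gamma))
    return tuple(out)


# Usage: the same HDR pixel rendered at two exposures; +1 EV halves the linear signal.
bright = tonemap_pixel((1.0, 1.0, 1.0), 0.0, 0.0, (1.0, 1.0, 1.0), (0.0, 0.0))
darker = tonemap_pixel((1.0, 1.0, 1.0), 0.0, 1.0, (1.0, 1.0, 1.0), (0.0, 0.0))
print(bright)  # → (1.0, 1.0, 1.0)
```

Rendering with a different `ev` than the one estimated for a training photo is how, under this model, an under- or overexposed view could be re-exposed.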

Because the original reconstruction is refined during training, the authors show that their system synthesizes sharper and more consistent novel views than previous systems. The efficient one-pixel point rasterization also makes it possible to use any camera model and to display scenes with well over 100M points in real time.

Related Work

1. Point-Based Rendering

Point-based rendering has long attracted the interest of computer graphics researchers. The first major approach represents points as oriented discs, commonly called splats or surfels, with the radius of each disc precomputed from the density of the point cloud. The discs are drawn with a Gaussian alpha mask and then combined with a normalizing blend function to remove visible artifacts between neighboring splats. Auto Splats, a technique that automatically computes oriented and sized splats, is one example of an enhancement to surfel rendering.
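The Gaussian-weighted, normalized blending of splats can be sketched as follows. For simplicity, this assumes screen-aligned circular splats with no orientation and no depth test, both of which real surfel renderers handle; the function name and the splat tuple layout are hypothetical. Dividing the accumulated color by the accumulated weight is the normalization step: overlapping splats of equal color produce that same color instead of a brighter seam.

```python
import math


def splat_gaussian(splats, width, height, background=0.0):
    """Blend screen-space circular splats with Gaussian weights, then normalize.

    splats: list of (cx, cy, sigma, value) in pixel units.
    Simplification: no orientation and no depth test; each splat contributes
    to every pixel inside a box of three standard deviations around its center.
    """
    acc = [[0.0] * width for _ in range(height)]
    wsum = [[0.0] * width for _ in range(height)]
    for cx, cy, sigma, value in splats:
        reach = 3.0 * sigma
        x0, x1 = max(0, math.floor(cx - reach)), min(width - 1, math.ceil(cx + reach))
        y0, y1 = max(0, math.floor(cy - reach)), min(height - 1, math.ceil(cy + reach))
        for y in range(y0, y1 + 1):
            for x in range(x0, x1 + 1):
                # Gaussian alpha mask evaluated at the pixel center.
                d2 = (x + 0.5 - cx) ** 2 + (y + 0.5 - cy) ** 2
                w = math.exp(-d2 / (2.0 * sigma * sigma))
                acc[y][x] += w * value
                wsum[y][x] += w
    # Normalize by the total weight; uncovered pixels keep the background.
    return [[acc[y][x] / wsum[y][x] if wsum[y][x] > 0.0 else background
             for x in range(width)] for y in range(height)]


# Usage: two overlapping splats of the same value blend to that value, with no bright seam.
image = splat_gaussian([(2.0, 2.0, 1.0, 1.0), (3.0, 2.0, 1.0, 1.0)], 8, 5)
print(image[2][2], image[4][7])  # → 1.0 0.0
```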

Point sample rendering is the second main approach to point-based graphics: points are drawn as one-pixel splats, which yields a sparse image of the scene. In a post-processing step, the final image is reconstructed with iterative or pyramid-based hole-filling techniques. During rendering, points with similar depth values can be blended to reduce aliasing when the view is in motion.
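Pyramid-based hole filling of a sparse one-pixel rendering can be sketched with a simple pull-push scheme: average the valid pixels of each 2x2 block into a coarser level, recurse until no holes remain, then copy coarse values back into the holes. This is an unweighted textbook variant chosen for illustration, not the exact filter of any particular system; `None` marks an empty pixel.

```python
def fill_holes(image):
    """Pull-push hole filling on a 2D grid where None marks a hole."""
    h, w = len(image), len(image[0])
    if all(v is not None for row in image for v in row):
        return [row[:] for row in image]
    if h == 1 and w == 1:
        return [row[:] for row in image]  # a single empty pixel: nothing to pull from
    # Pull: average the valid pixels of each 2x2 block into a coarser level.
    ch, cw = (h + 1) // 2, (w + 1) // 2
    coarse = []
    for cy in range(ch):
        row = []
        for cx in range(cw):
            vals = [image[y][x]
                    for y in (2 * cy, 2 * cy + 1) if y < h
                    for x in (2 * cx, 2 * cx + 1) if x < w
                    if image[y][x] is not None]
            row.append(sum(vals) / len(vals) if vals else None)
        coarse.append(row)
    coarse = fill_holes(coarse)
    # Push: copy each coarse value into the holes it covers at this level.
    return [[image[y][x] if image[y][x] is not None else coarse[y // 2][x // 2]
             for x in range(w)] for y in range(h)]


# Usage: a sparse 2x2 image with two holes.
print(fill_holes([[1.0, None], [None, 3.0]]))  # → [[1.0, 2.0], [2.0, 3.0]]
```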

2. Novel View Synthesis

Traditional novel view synthesis, which is closely related to image-based rendering (IBR), is based on the basic premise of warping colors from one frame to the next. One method is to use a triangle-mesh proxy to warp the image colors directly to a new perspective.
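Warping colors to a new perspective requires a depth for each source pixel, which a proxy such as a triangle mesh can supply. The sketch below reprojects one pixel under an assumed pinhole model shared by both cameras, with a rotation `R` and translation `t` mapping source-camera coordinates into target-camera coordinates; the color at the source pixel would then be written at the returned location. The function name and conventions are illustrative assumptions.

```python
def reproject(px, py, depth, f, cx, cy, R, t):
    """Warp a source pixel into a target view using its proxy depth.

    Pinhole intrinsics (f, cx, cy) are shared by both cameras; R (3x3 nested
    lists) and t map source-camera coordinates to target-camera coordinates.
    Returns target pixel coordinates, or None if the point is behind the camera.
    """
    # Unproject the pixel to a 3D point in the source camera frame.
    p = ((px - cx) * depth / f, (py - cy) * depth / f, depth)
    # Rigid transform into the target camera frame: q = R p + t.
    q = [sum(R[i][j] * p[j] for j in range(3)) + t[i] for i in range(3)]
    if q[2] <= 0.0:
        return None  # behind the target camera
    # Project with the same pinhole intrinsics.
    return (f * q[0] / q[2] + cx, f * q[1] / q[2] + cy)


IDENTITY = [[1.0, 0.0, 0.0], [0.0, 1.0, 0.0], [0.0, 0.0, 1.0]]

# Usage: an offset of 0.1 units along x shifts a pixel at depth 2 by f * 0.1 / 2 = 25 px.
print(reproject(100.0, 50.0, 2.0, 500.0, 320.0, 240.0, IDENTITY, (0.1, 0.0, 0.0)))
```

Nearer pixels shift more than distant ones for the same camera offset (parallax), so an error in the proxy depth moves a color to the wrong place, one source of the ghosting artifacts mentioned in the introduction.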

Novel view synthesis can also be accomplished by reconstructing a 3D model of the scene and rendering it from novel views. One such approach learns a neural texture on a triangle mesh that can be drawn using standard rasterization. A deep neural network then converts the rasterized feature image to RGB.

Point clouds have also been shown to be suitable geometric proxies for novel view synthesis. Neural Point-based Graphics (NPBG), which is closely related to ADOP, rasterizes a point cloud with learned neural descriptors at several resolutions. A deep neural network then uses these images to reconstruct the final output image.

3. Inverse Rendering

Inverse rendering and differentiable rendering have been studied for a long time. Major advances, however, have only come in recent years, driven by improved hardware and progress in deep learning. Inverse rendering is the process of estimating intrinsic physical properties of a scene, such as reflectance, geometry, and lighting, from a single photo or a set of photos.

Inferring these properties from 2D projections is difficult, but recent breakthroughs in this field have made significant progress toward solving the problem.
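As a deliberately small example of inverse rendering, the following recovers a single Lambertian albedo from observed intensities when unit surface normals and a directional light are assumed known. Minimizing the squared error of `albedo * s - I` over all pixels, with `s` the shading term, gives the closed form `albedo = sum(I*s) / sum(s*s)`. Real inverse rendering estimates geometry, reflectance, and lighting jointly, which is far harder; the function here is hypothetical.

```python
def estimate_albedo(intensities, normals, light_dir, light_intensity=1.0):
    """Least-squares Lambertian albedo given known unit normals and a directional light."""
    # Shading term s = E * max(0, n . l) for each pixel.
    shading = [
        light_intensity * max(0.0, sum(n_i * l_i for n_i, l_i in zip(n, light_dir)))
        for n in normals
    ]
    den = sum(s * s for s in shading)
    if den == 0.0:
        raise ValueError("no lit pixels: albedo cannot be observed")
    # Setting d/d(albedo) of sum((albedo * s - I) ** 2) to zero gives this ratio.
    return sum(i * s for i, s in zip(intensities, shading)) / den


# Usage: synthesize intensities with albedo 0.7, then recover it.
normals = [(0.0, 0.0, 1.0), (0.0, 0.6, 0.8), (0.6, 0.0, 0.8)]
light = (0.0, 0.0, 1.0)
observed = [0.7 * s for s in (1.0, 0.8, 0.8)]
print(round(estimate_albedo(observed, normals, light), 6))  # → 0.7
```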

Conclusion

Researchers have presented a novel differentiable, neural point-based rendering technique. Each point is projected into image space and its neural descriptor is blended in, forming a multi-resolution neural image. A deep convolutional neural network then processes this image and produces an HDR image of the scene. The whole source code is accessible here.